The APM syllabus contains the learning outcome:

‘Advise on the common mistakes and misconceptions in the use of numerical data used for performance measurement’.

The mistakes and misconceptions can be divided into two causes:

- The quality of the data: what measures have been chosen and how has the data been collected?

- How has the data been processed and presented to allow valid conclusions to be drawn?

Inevitably, these two causes overlap because the nature of the data collected will influence both processing and presentation.

The collection and choice of data

What to measure?

What data to measure is the first decision and the first place where wrong conclusions can be either innocently or deliberately generated. For example:

- A company boasts about impressive revenue increases but downplays or ignores disappointing profits.

- A manager wishing to promote one of two mutually exclusive projects might concentrate on its impressive IRR whilst glossing over which project has the higher NPV.

- An investment company with 20 different funds advertises only the five most successful ones.

Not only might inappropriate measures be chosen, but they might also be deliberately left undefined. For example, a marketing manager in a consumer products company might report that the company’s new toothbrush is reported by users to be 20% better.

But what’s meant by that statement? What is ‘better’? Even if that quality could be defined, is the toothbrush 20% better than, for example, using nothing, competitors’ products, the company’s previous products, or a tree twig?

Another potential ruse is to confuse readers with relative and absolute changes. For example, you will occasionally read reports claiming that eating a particular type of food will double your risk of getting a disease. Doubling sounds serious, but what if you were told that consumption would change your risk from 1 in 10m to 1 in 5m? For most people, doubling the risk no longer looks quite so serious: the event is still rare and the risk very low.

Similarly, if you were told that using a new material would halve the number of units rejected by quality control, you might be tempted to switch to using it. But if the rate of rejections is falling from 1 in 10,000 to 1 in 20,000, the switch does not look so convincing – although it would depend on the consequences of failure.
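The gap between the headline (relative) change and the underlying (absolute) change in the two examples above can be made explicit with a little arithmetic. The sketch below uses only the rates quoted in the text; the function name `describe_change` is a hypothetical helper for illustration.

```python
from fractions import Fraction


def describe_change(old_rate: Fraction, new_rate: Fraction) -> tuple[float, float]:
    """Return (relative change, absolute change) between two rates.

    The relative change is the headline figure ("doubles", "halves");
    the absolute change is how far the underlying rate actually moves.
    """
    relative = (new_rate - old_rate) / old_rate
    absolute = new_rate - old_rate
    return float(relative), float(absolute)


# Disease example from the text: risk moves from 1 in 10m to 1 in 5m.
rel, absolute = describe_change(Fraction(1, 10_000_000), Fraction(1, 5_000_000))
print(f"Disease: relative {rel:+.0%}, absolute {absolute * 1_000_000:+.2f} per million people")

# Rejections example from the text: 1 in 10,000 falls to 1 in 20,000.
rel, absolute = describe_change(Fraction(1, 10_000), Fraction(1, 20_000))
print(f"Rejections: relative {rel:+.0%}, absolute {absolute * 100_000:+.1f} per 100,000 units")
```

A risk that "doubles" here adds about one extra case per ten million people, and "halving" rejections saves about five units in every 100,000.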

Sampling

Many statistical results depend on sampling. The characteristics of a sample of the population are measured and, based on those measurements, conclusions are drawn about the characteristics of the population. There are two potential problems:

- For the conclusions to be valid, the sample must be representative of the population. This means that random sampling must be used, so that every member of the population has an equal chance of being selected for the sample. Other sorts of sampling are liable to introduce bias, so that some elements of the population are over- or under-represented and false conclusions are drawn. For example, a marketing manager could sample customer satisfaction only at outlets known to be successful.

- Complete certainty can only be obtained by looking at the whole population, and there are dangers in relying on samples which are too small. It is possible to quantify these dangers and, in particular, you need information such as: ‘to a 95% confidence level, average salaries are $20,000 ± $2,300’. This means that, based on the sample, you are 95% confident (the confidence level) that the population’s average salary is between $17,700 and $22,300 (the confidence interval). Of course, there is a 5% chance that the true average salary lies outside this range. Conclusions based on samples are meaningless if confidence intervals and confidence levels are not supplied.

The larger the sample, the greater the reliance that can be placed on the conclusions drawn. In general, the width of the confidence interval is inversely proportional to the square root of the sample size. So, to halve the confidence interval the sample size has to be quadrupled, which is often a significant amount of work and expense.
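The square-root relationship can be sketched with the usual normal-approximation formula for a confidence interval around a mean, half-width = z × s / √n, where s is the sample standard deviation and z = 1.96 for 95% confidence. This is a minimal sketch assuming a reasonably large random sample; the standard deviation of $11,700 is a hypothetical figure chosen only for illustration, not something stated in the text.

```python
import math

Z_95 = 1.96  # two-sided 95% point of the standard normal distribution


def ci_half_width(sample_sd: float, n: int, z: float = Z_95) -> float:
    """Half-width of a confidence interval for a mean (normal approximation)."""
    return z * sample_sd / math.sqrt(n)


def ci(mean: float, half_width: float) -> tuple[float, float]:
    """Lower and upper limits of the interval mean +/- half_width."""
    return mean - half_width, mean + half_width


# The text's example: average salary $20,000 +/- $2,300 at 95% confidence.
print(ci(20_000, 2_300))  # (17700, 22300)

# Hypothetical sample standard deviation, for illustration only.
sd = 11_700
for n in (100, 400, 1_600):
    # Each quadrupling of n halves the half-width.
    print(n, round(ci_half_width(sd, n)))
```

Going from 100 to 400 to 1,600 observations halves the interval each time, which is why tighter precision quickly becomes expensive.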

More on small samples

Consider a company that has launched a new advert on television. The company knows that before the advert 50% of the population recognised its brand name. The marketing director is keen to show the board that the advert has been effective in raising brand recognition to at least 60%. To support this contention, a small survey has been quickly conducted by stopping 20 people at ‘random’ in the street and testing their brand recognition. (Note that this methodology can introduce bias: which members of the population are out and about during the survey period? Which street was used? What are the views of people who refuse to be questioned?)

Even if the advert were completely ineffective, it can be shown that there is a 25% chance that at least 12 out of the 20 people selected will recognise the brand. So, if the director didn’t get a favourable answer from the first sample of 20, another small sample could be quickly organised. There is a good chance that by the time about four surveys have been carried out, one of the results will show the improved recognition that the marketing director wants. It’s rather like flipping a coin 20 times: you intuitively know that there is a good chance of getting an 8:12 split in the results. If, instead of just 20 people being surveyed, 100 were asked, then the chance of getting a recognition rate of at least 60% would be only about 2.8%. (Note: these results make use of the binomial distribution, which you do not need to be able to use.)
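For readers who want to check the figures, the binomial probabilities can be computed directly. This sketch assumes, as the text does, that an ineffective advert leaves each respondent with a 50% chance of recognising the brand, and that repeated surveys are independent. It reproduces the 25% figure for a sample of 20, gives about 69% for the chance that at least one of four such surveys shows 60% or more, and about 2.8% for at least 60 out of 100 (the chance of strictly more than 60, ie 61 or more, is about 1.8%).

```python
from math import comb


def prob_at_least(k: int, n: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p): chance of k or more 'successes'."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


# Advert completely ineffective: each person recognises the brand with p = 0.5.
p20 = prob_at_least(12, 20, 0.5)        # at least 60% of a sample of 20
p100 = prob_at_least(60, 100, 0.5)      # at least 60% of a sample of 100
p100_over = prob_at_least(61, 100, 0.5) # more than 60% of a sample of 100

# Chance that at least one of four independent surveys of 20 shows >= 60%.
p_four_surveys = 1 - (1 - p20) ** 4

print(f"n=20: {p20:.1%}   best of four n=20 surveys: {p_four_surveys:.1%}")
print(f"n=100: at least 60 {p100:.1%}, more than 60 {p100_over:.1%}")
```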

In general, small samples:

- Increase the chance that results are false positives.

- Increase the chance that important effects will be missed.

Always be suspicious of survey results that do not tell you how many items were in the sample.

Another example of a danger arising from small samples is that of seeing a pattern where there is none of any significance.

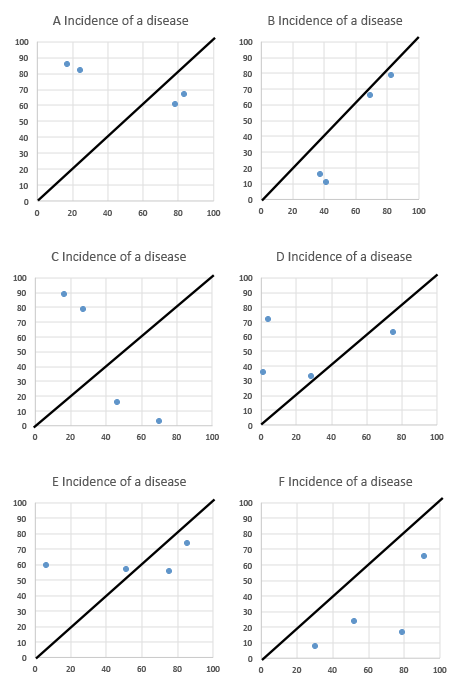

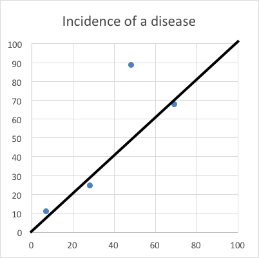

Imagine a small country of 100km x 100km. Assume that the population is evenly distributed and that four people will suffer from a specific disease. In the graphs, the locations of the sufferers have been generated randomly using Excel and plotted on the 100 x 100 grid. These are actual results from six consecutive recalculations of the spreadsheet data and represent six possible scenarios.

Now imagine you are a researcher who believes that the disease might be caused by high-speed trains. The dark diagonal line represents the railway track running through the country.

Have a look at the position of the dots (sick people) compared to the railway track. If you wanted to see a clustering of disease close to the track, you could probably do so in several of the charts. Yet the data has been generated randomly.

I didn’t have to do many more recalculations before the following pattern emerged:

For people predisposed to believing what they want to believe, this graph is presenting them with a pattern they will interpret as conclusive evidence of the effect.

The problem is that if you are dealing with only four pieces of data, there is a good chance that they will appear to cluster around any given shape. Negative results, such as those seen in Graph C, are easily dismissed and researchers concentrate on the patterns they want to see.
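How often does pure chance produce an apparent cluster? The sketch below repeats the spreadsheet experiment many times. It assumes, hypothetically, that the track runs corner to corner along the line y = x and that "close to the track" means within 10km of it; the four sufferers are placed independently and uniformly at random, as in the text. It computes the exact share of the country inside that strip and estimates, both exactly and by simulation, how often at least two of the four sufferers live there.

```python
import math
import random

SIDE_KM = 100.0  # the country is 100km x 100km (from the text)
BAND_KM = 10.0   # hypothetical: "close to the track" means within 10km of it


def near_track(x: float, y: float, band: float = BAND_KM) -> bool:
    """True if (x, y) is within `band` km of a track assumed to run along y = x."""
    # Perpendicular distance from (x, y) to the line y = x is |x - y| / sqrt(2).
    return abs(x - y) / math.sqrt(2) <= band


def share_of_country_near_track(band: float = BAND_KM, side: float = SIDE_KM) -> float:
    """Exact fraction of the square lying within `band` km of the diagonal.

    The area outside the strip is two right-angled triangles which together
    make a square of side (side - band * sqrt(2)).
    """
    outside = (side - band * math.sqrt(2)) ** 2
    return 1 - outside / side**2


def simulate(trials: int = 100_000, sufferers: int = 4, at_least: int = 2, seed: int = 1) -> float:
    """Fraction of random layouts with `at_least` sufferers living near the track."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        near = sum(
            near_track(rng.uniform(0, SIDE_KM), rng.uniform(0, SIDE_KM))
            for _ in range(sufferers)
        )
        if near >= at_least:
            hits += 1
    return hits / trials


p = share_of_country_near_track()
# Binomial chance that 2, 3 or 4 of the 4 sufferers fall inside the strip.
exact = sum(math.comb(4, k) * p**k * (1 - p) ** (4 - k) for k in range(2, 5))
print(f"Land within {BAND_KM:.0f}km of the track: {p:.1%}")
print(f"P(at least 2 of 4 sufferers near the track): exact {exact:.1%}, simulated {simulate():.1%}")
```

Under these assumptions the strip covers only about a quarter of the country, yet in roughly 28% of purely random layouts at least half of the sufferers live inside it.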

Now think about the following business propositions:

- A business receives very few complaints about its level of service, but in one year all complaints relate to one branch. Does that indicate that the branch is performing poorly or is it just an artefact of chance?

- In a year a business tenders for 1000 contracts but only three are won – all by the same sales team. Does that really mean that that sales team is fantastic or is it again simply the result of chance?
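A rough way to judge the two propositions above is to ask how likely it is that a handful of events would all land in the same place purely by chance. This is a minimal sketch assuming every branch (or sales team) is equally likely to attract each complaint (or win); the numbers of branches and teams are hypothetical, since the text does not give them.

```python
def prob_all_in_one_group(events: int, groups: int) -> float:
    """Chance that `events` independent events all land in the same one of
    `groups` equally likely groups (any group, not one chosen in advance)."""
    return groups * (1 / groups) ** events


# Hypothetical figures, not from the text:
# 3 complaints spread at random over 10 equally busy branches.
print(f"All 3 complaints at one branch: {prob_all_in_one_group(3, 10):.1%}")
# 3 contract wins shared at random between 5 equally able sales teams.
print(f"All 3 wins by one team:         {prob_all_in_one_group(3, 5):.1%}")
```

A 1% or 4% chance is unusual, but with so few events it is far from proof: over several years, or across many similar measures, such coincidences are bound to turn up somewhere.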

The processing and presentation of data

Averages

Almost certainly when you use the term ‘average’ you are referring to the arithmetic mean. This is calculated by adding up all results and dividing by the number of results. So, for example:

| Person | Height (cm) |
|---|---|
| A | 175 |
| B | 179 |
| C | 185 |
| D | 179 |
| E | 176 |
| Total | 894 |

So the arithmetic mean height of these five people is 894/5 = 178.8cm, and this feels like a natural way to describe an important feature of the data. However, as we will see below, it can lead you astray.

The arithmetic mean is one measure of the data’s location. The other common measures are:

Mode: the most commonly occurring value. In the table above, the mode is 179. This measure would be more useful to you than the mean if you were a mobile phone manufacturer and needed to know customer preferences for phones with 8, 16, 32 or 64GB of storage: you need to know which is the most popular.

Median: this is the value of the middle-ranking item. So, for the data above, arrange the people in ascending order of height and find the height of the person at the mid-point.

| Person | Height (cm) |
|---|---|
| A | 175 |
| E | 176 |
| B | 179 |
| D | 179 |
| C | 185 |

So, the height of the mid-ranking person (B) is 179cm, and this is the median.
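The three measures of location for the height data can be checked with Python’s standard `statistics` module. This is simply a sketch that reproduces the worked figures above from the five heights in the table.

```python
import statistics

heights_cm = {"A": 175, "B": 179, "C": 185, "D": 179, "E": 176}
values = list(heights_cm.values())

print("Mean:  ", statistics.mean(values))    # 894 / 5
print("Median:", statistics.median(values))  # middle value once sorted
print("Mode:  ", statistics.mode(values))    # most frequently occurring value
```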

Unless the distribution of the data is completely symmetrical, the mean, mode and median will generally not have the same values. In particular, the arithmetic mean can be distorted by extreme values, which can lead to it being misinterpreted.

To demonstrate this we will initially set up a theoretical symmetrical distribution of the annual income of a population:

| Annual income ($000) | 15 | 25 | 35 | 45 | 55 | 65 | 75 | 85 | 95 |
|---|---|---|---|---|---|---|---|---|---|
| Number of people (000) | 10 | 20 | 30 | 40 | 50 | 40 | 30 | 20 | 10 |

The mean, median and mode are all $55,000. If you earned that you would feel that you were on ‘average’ pay with as many people earning more than you as less than you.

Now let’s say that into this population comes Martin Gutenburg, the founder of a hi-tech internet company, who invented a social media service. Mr Gutenburg has a very high income: $10m a year. The salary distribution now looks like:

| Annual income ($000) | 15 | 25 | 35 | 45 | 55 | 65 | 75 | 85 | 95 | 10,000 |
|---|---|---|---|---|---|---|---|---|---|---|
| Number of people (000) | 10 | 20 | 30 | 40 | 50 | 40 | 30 | 20 | 10 | 1 person (M Gutenburg) |

The arithmetic mean of this distribution is now about $55,040 ($13,760m of income shared between 250,001 people), so if you earn $55,000 you now feel that you are earning less than average. In fact, over 50% of the population is earning less than ‘average’, something that at first glance would seem impossible.

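This distortion could allow a government to claim that people are now better off because average earnings are higher, even though nobody apart from Gutenburg is any better off. A large enough outlier can even hide a general fall in incomes: with these figures, if every salary band were reduced by 5%, the arithmetic mean would stay above its original $55,000 only if Gutenburg earned more than about $688m a year. In such a case the government could claim that on average the population is better off when, in fact, almost everyone is worse off.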

In situations where the data is not symmetrical, the median value will often provide a more useful measure. The inclusion of Gutenburg does not change the median value and if everyone’s income fell by 5%, so would the median.
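The income example can be reproduced with a short calculation on the grouped data. This sketch assumes, as grouped tables usually do, that everyone in a band earns the band value; the median is taken as the band containing the middle-ranked person. It compares the original population, the population including Gutenburg’s $10m, and the same population after a 5% cut to every salary band, and it also works out how large a single outlier income would have to be to keep the mean at $55,000 after that cut.

```python
# Income bands in $000 and headcounts, from the text's symmetrical distribution.
BANDS = [15, 25, 35, 45, 55, 65, 75, 85, 95]
COUNTS = [10_000, 20_000, 30_000, 40_000, 50_000, 40_000, 30_000, 20_000, 10_000]
GUTENBURG = 10_000  # $10m a year, expressed in $000


def mean_income(incomes, counts):
    """Arithmetic mean, treating everyone in a band as earning the band value."""
    return sum(i * c for i, c in zip(incomes, counts)) / sum(counts)


def median_income(incomes, counts):
    """Band containing the middle-ranked person (incomes must be ascending)."""
    middle = (sum(counts) + 1) / 2
    running = 0
    for income, count in zip(incomes, counts):
        running += count
        if running >= middle:
            return income
    raise ValueError("counts must not be empty")


with_g = (BANDS + [GUTENBURG], COUNTS + [1])
cut_5pct = ([b * 0.95 for b in BANDS] + [GUTENBURG], COUNTS + [1])

scenarios = [("Original", (BANDS, COUNTS)),
             ("With Gutenburg", with_g),
             ("With Gutenburg, bands cut 5%", cut_5pct)]
for label, (incomes, counts) in scenarios:
    print(f"{label:30} mean ${mean_income(incomes, counts) * 1000:,.0f}  "
          f"median ${median_income(incomes, counts) * 1000:,.0f}")

# Outlier income ($000) needed for the mean to stay at $55,000 after the 5% cut.
people = sum(COUNTS) + 1
break_even = 55 * people - sum(b * 0.95 * c for b, c in zip(BANDS, COUNTS))
print(f"Break-even outlier income: ${break_even * 1000:,.0f}")
```

The median moves only when the bulk of incomes move, which is why it is the safer summary for skewed data like this.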

Correlation

One of the most common misuses of data is to assume that good correlation between two sets of data (ie they move closely together) implies causation (that one causes the other). This is an immensely seductive fallacy and one that needs to be constantly fought against.

For example, consider this data set:

| Year | People with diabetes in UK¹ (m) | Sales of smartphones in UK² (m) |
|---|---|---|
| 2012 | 3.04 | 26.4 |
| 2013 | 3.21 | 33.2 |
| 2014 | 3.33 | 36.4 |
| 2015 | 3.45 | 39.4 |

¹ Diabetes UK

² Statista/eMarketer

On a graph the data looks like:

The two sets of data follow one another closely and indeed the coefficient of correlation between the variables is 0.99, meaning very close association.
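The 0.99 figure can be checked with the standard Pearson product-moment formula, r = Sxy / √(Sxx × Syy), applied to the four years in the table. The sketch below writes the formula out by hand; on Python 3.10 or later, `statistics.correlation` computes the same coefficient.

```python
import math

diabetes_m = [3.04, 3.21, 3.33, 3.45]     # first data column of the table, 2012-2015
smartphones_m = [26.4, 33.2, 36.4, 39.4]  # second data column of the table, 2012-2015


def pearson_r(xs, ys):
    """Pearson product-moment coefficient of correlation."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


print(f"r = {pearson_r(diabetes_m, smartphones_m):.2f}")
```

Note that there are only four data points and both series simply rise over time, so any two steadily growing quantities would show a similarly high coefficient.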

It is unlikely that any of you believe that owning a smartphone causes diabetes, or vice versa, and you will readily accept that the high correlation is spurious. However, with other highly correlated sets of data it is easier to assume that there is causation. For example:

- Use of MMR vaccines and incidence of autism. Almost no doctors now accept that there is any causal connection. In addition, the original study that suggested the link was later discredited and the doctor responsible was struck off the UK medical register.

- Cigarette smoking and lung cancer. A causal effect is well-established, but it took more than correlation to do so.

- Concentration of CO₂ in the atmosphere and average global temperatures. Not universally accepted (but increasingly accepted).

Graphs and pictograms

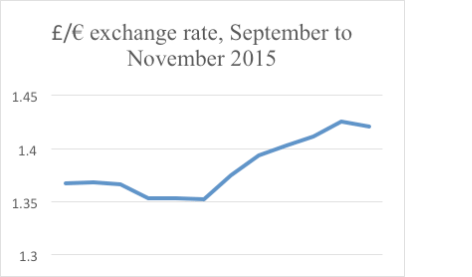

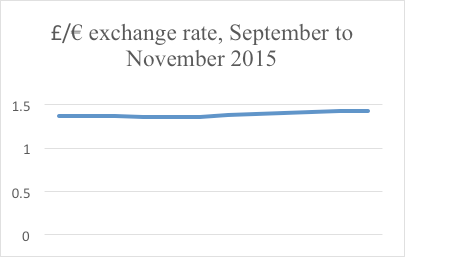

Here’s a graph of the £/€ exchange rate for September to October 2015. It seems to be quite a rollercoaster:

However, the effect has been magnified because the y axis starts at 1.3, not 0: the whole graph only stretches from 1.3 to 1.44. If the graph is redrawn with the y axis starting at 0, it looks as follows:

Not nearly so dramatic.

Note that a board of directors that wants to accentuate profit changes could easily make small increases look dramatic, simply by starting the y axis at a high value.
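The exaggeration caused by a truncated axis can be quantified as the share of the chart’s height that a given change occupies. This sketch uses the 1.3 to 1.44 axis range from the text; the 0.05 movement in the exchange rate is a hypothetical figure chosen for illustration.

```python
def share_of_chart_height(change: float, axis_min: float, axis_max: float) -> float:
    """Fraction of a chart's vertical axis that a given change occupies."""
    return change / (axis_max - axis_min)


# Hypothetical move of 0.05 in the exchange rate (eg 1.35 to 1.40).
move = 0.05
truncated = share_of_chart_height(move, 1.30, 1.44)  # axis range used in the text
from_zero = share_of_chart_height(move, 0.00, 1.44)  # same data, axis starting at 0

print(f"Truncated axis:  {truncated:.0%} of the chart height")
print(f"Zero-based axis: {from_zero:.1%} of the chart height")
print(f"Visual exaggeration: about {truncated / from_zero:.0f}x")
```

The same movement fills roughly ten times as much of the chart when the axis starts at 1.3, which is exactly the effect a board keen to dramatise small profit increases could exploit.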

Pictograms are often used to make numerical results more striking and interesting. Look at the following set of results:

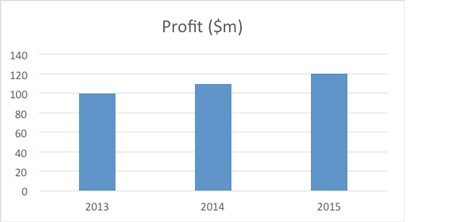

| Year | Profit ($m) |
|---|---|
| 2013 | 100 |
| 2014 | 110 |
| 2015 | 120 |

The increase has been a relatively modest $10m a year (10% in 2014 and about 9% in 2015) and on a bar chart would appear as:

A pictogram could show this as:

Look at the first and last bags of money and think about how much you could fit into each. I would suggest that the capacity of the third one looks at least 50% greater than that of the first one. That’s because its linear dimensions have increased by 20%, which means that its capacity has increased by a factor of 1.2³ ≈ 1.73 (about 73%), flattering the results.
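The arithmetic behind the flattering pictogram is short. Using the profits from the table, this sketch compares the true 20% growth with the apparent growth when a reader judges an icon scaled in every direction by its area (two dimensions) or by its volume (three dimensions, as with a bag of money).

```python
profits = {2013: 100, 2014: 110, 2015: 120}  # $m, from the table

linear = profits[2015] / profits[2013]  # bar height or icon width: 1.2x
area = linear ** 2                      # icon scaled in two directions
volume = linear ** 3                    # icon drawn as a 3-D bag

print(f"Actual growth 2013-2015:           {linear - 1:.0%}")
print(f"Apparent growth if read as area:   {area - 1:.0%}")
print(f"Apparent growth if read as volume: {volume - 1:.0%}")
```

A bar chart avoids the problem because only one dimension (height) changes with the data.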

So it is important to consider how data is collected, processed and presented, because it can be used to suggest that an organisation’s performance is better or worse than it actually is.

Ken Garrett is a freelance lecturer and writer