Policy and insights report

Explainable AI systems are key for driving enterprise adoption at scale.

AI in accountancy is neither fully autonomous nor a fantasy. The middle path of augmenting, rather than replacing, the human works best when the human understands what the AI is doing, and that requires explainability.

Explainable AI (XAI) emphasises the role of the algorithm not just in providing an output, but also in sharing with the user supporting information on how it reached that conclusion. XAI approaches aim to shine a light on the algorithm’s inner workings and/or to reveal which factors influenced its output.

Furthermore, the idea is for this information to be available in a human-readable way, rather than being hidden within code. The purpose of this report is to address explainability from the perspective of accountancy and finance practitioners.
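To make the idea concrete, the following is a minimal sketch in Python of one simple form of explainability: a score that is reported together with the contribution of each input factor. It is not taken from the report. The feature names, weights and the `explain` helper are all hypothetical, chosen only to illustrate how an output can be accompanied by human-readable supporting information.

```python
# Illustrative only: a hypothetical linear risk score for an expense claim.
# The features, weights and bias are assumptions, not values from the report.
WEIGHTS = {"amount_gbp": 0.002, "days_late": 0.05, "new_supplier": 1.5}
BIAS = -2.0


def score(features):
    """Return the model output: bias plus the weighted sum of the inputs."""
    return BIAS + sum(WEIGHTS[name] * value for name, value in features.items())


def explain(features):
    """Return (factor, contribution) pairs, most influential first."""
    contributions = {name: WEIGHTS[name] * value for name, value in features.items()}
    return sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)


claim = {"amount_gbp": 1200, "days_late": 10, "new_supplier": 1}

print(f"Risk score: {score(claim):.2f}")
for factor, contribution in explain(claim):
    # Each line tells the user how much a factor moved the score.
    print(f"  {factor}: {contribution:+.2f}")
```

In this sketch the user sees not only the score but also that the claim amount influenced it most, followed by the supplier being new. Real XAI tools typically handle far more complex models, where such contributions have to be estimated rather than read directly from the weights.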

Explainability can improve the ability to assess vendor solutions in the market, enhance value capture from AI that’s already in use, boost ROI from AI investments and augment audit and assurance capabilities where data is managed using AI tools. The report also includes some examples for market practitioners to illustrate current developments.

Key messages for practitioners:

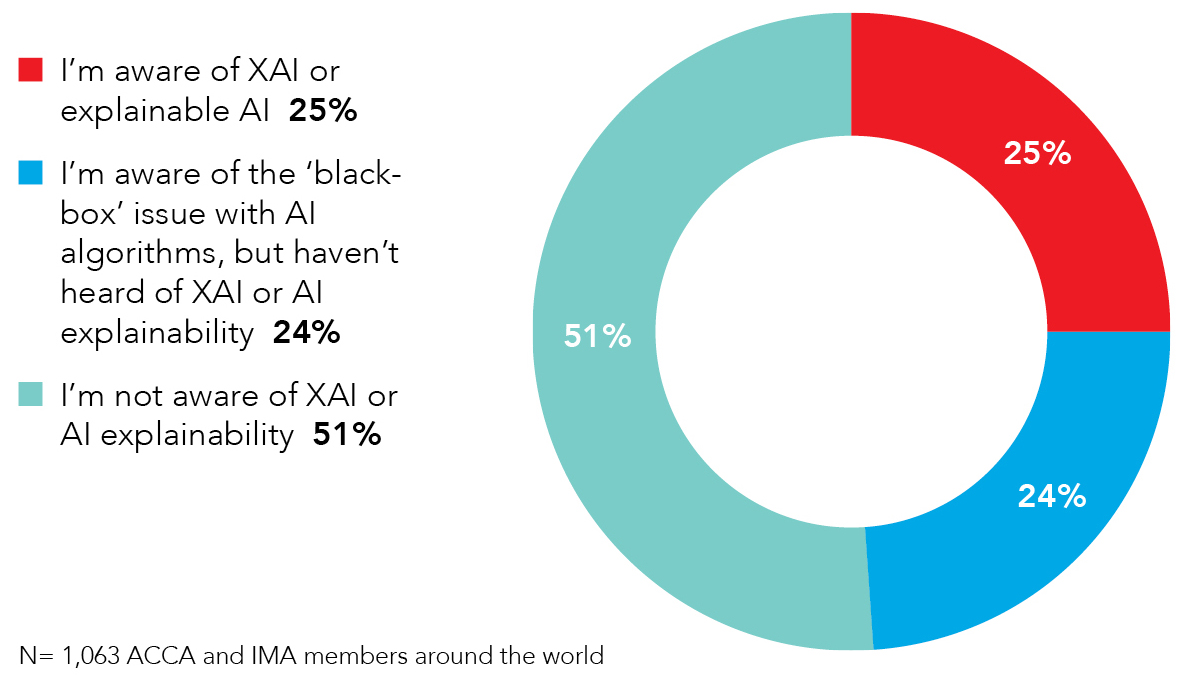

- Maintain awareness of evolving trends in AI: 51% of respondents were unaware of XAI, which impairs their ability to engage with it. This report sets out some of the key developments in this emerging area to raise awareness.

- Beware of oversimplified narratives: In accountancy, AI isn’t fully autonomous, but nor is it a complete fantasy. The middle path of augmenting, as opposed to replacing, the human works best when the human understands what the AI is doing, which requires explainability.

- Embed explainability into enterprise adoption: Consider the level of explainability needed, and how it can help with model performance, ethical use and legal compliance.

Policy makers, for instance in government or at regulators, frequently hear the developer/supplier perspective from the AI industry. This report can complement that with a view from the user/demand side, so that policy can incorporate consumer needs.

Key messages for policy makers:

- Explainability empowers consumers and regulators: Improved explainability reduces the deep asymmetry between experts who understand AI and the wider public. For regulators, a better understanding of the factors influencing algorithms that are increasingly deployed across the marketplace can help reduce systemic risk.

- Emphasise explainability as a design principle: An environment that balances innovation and regulation can be achieved by supporting industry to continue, indeed redouble, its efforts to include explainability as a core feature in product development.


Learning outcomes to include:

- The meaning of explainability in the context of AI

- Why it matters for accountancy and finance professionals

- Examples of how practitioners are using/developing XAI products